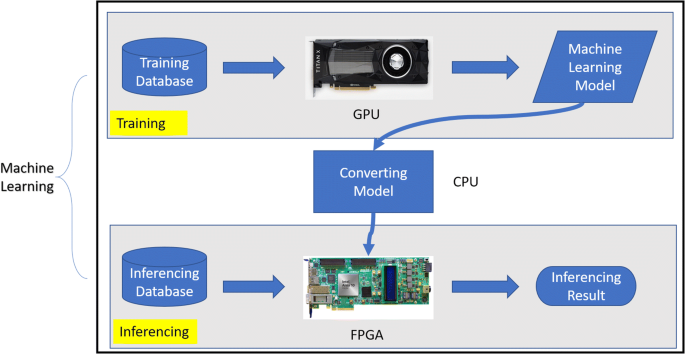

A hybrid GPU-FPGA based design methodology for enhancing machine learning applications performance | SpringerLink

Appendix C: The concept of GPU compiler — Tutorial: Creating an LLVM Backend for the Cpu0 Architecture

Parallelizing across multiple CPU/GPUs to speed up deep learning inference at the edge | AWS Machine Learning Blog

Is it possible to convert a GPU pre-trained model to CPU without cudnn? · Issue #153 · soumith/cudnn.torch · GitHub

Run multiple deep learning models on GPU with Amazon SageMaker multi-model endpoints | AWS Machine Learning Blog

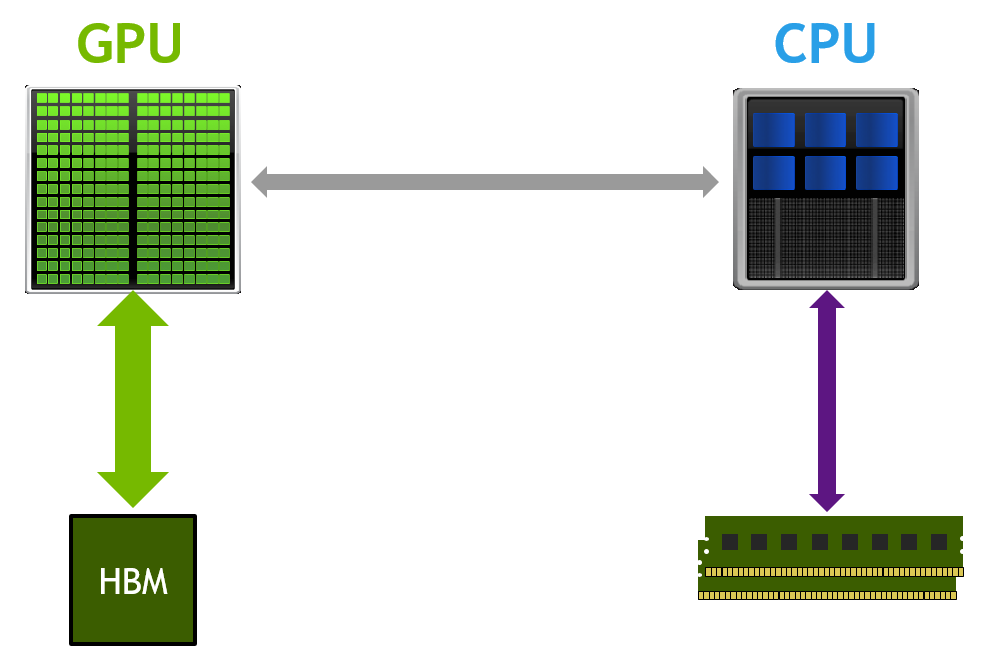

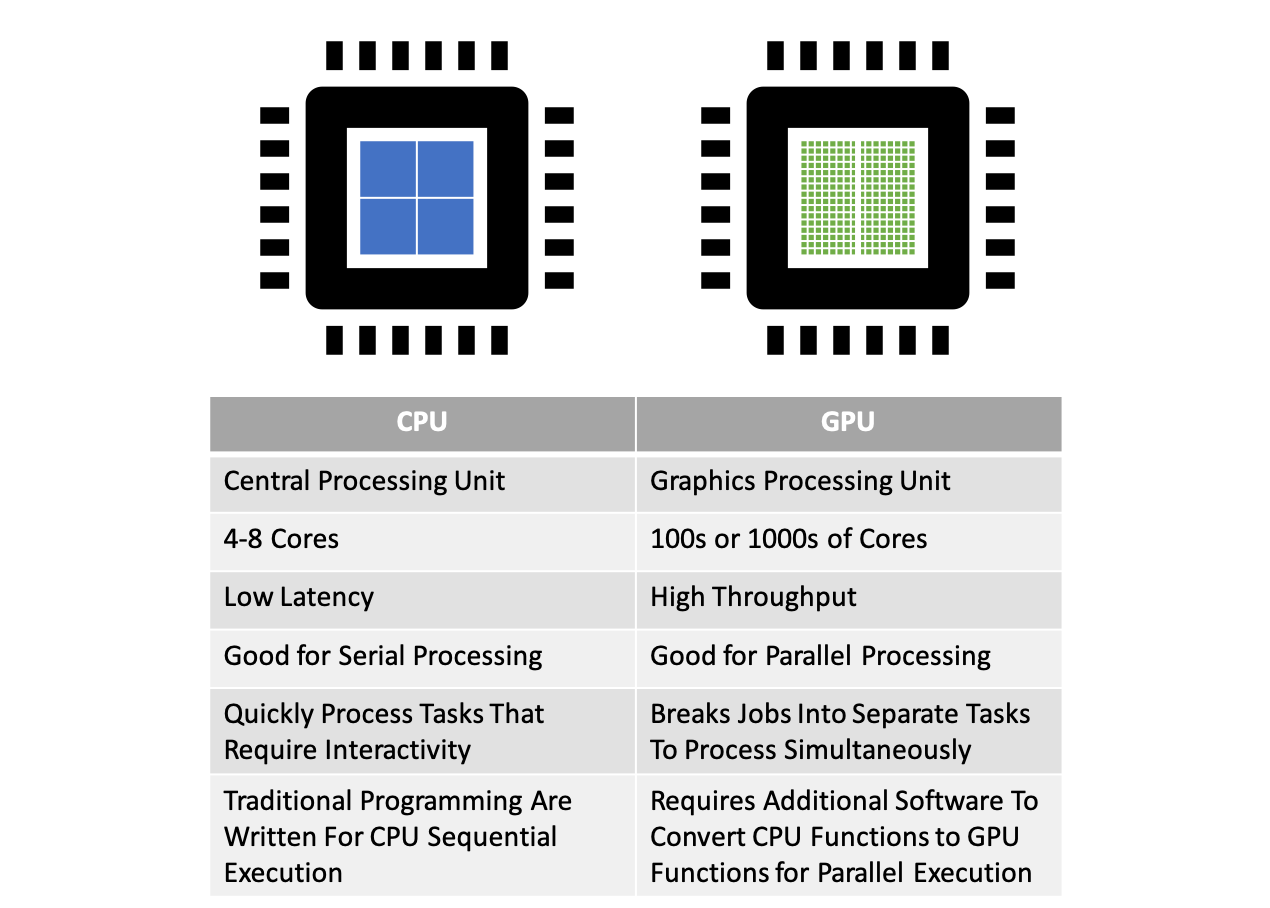

Parallel Computing — Upgrade Your Data Science with GPU Computing | by Kevin C Lee | Towards Data Science

Optimizing I/O for GPU performance tuning of deep learning training in Amazon SageMaker | AWS Machine Learning Blog